Saumil Patel

Argonne National Laboratory

Building high-order numerical methods and GPU-accelerated solvers for multi-physics simulations at exascale — from turbulent combustion in engines to blood flow in arteries.

My research develops high-order numerical methods for multi-physics simulations at scales that were, until recently, impossible. Over twelve years at Argonne National Laboratory, I have built spectral-element and lattice Boltzmann solvers that run efficiently on tens of thousands of GPUs — from NVIDIA’s accelerators at the Argonne Leadership Computing Facility to the AMD-based Frontier exascale machine at Oak Ridge.

I work at the intersection of three frontiers: mathematically rigorous discretization, performance-portable GPU computing, and data-driven augmentation of classical solvers.

This means developing spectral-element and discontinuous Galerkin formulations with provable convergence properties, then engineering them for portable execution across heterogeneous architectures using CUDA, SYCL, HIP, and Kokkos. Most recently, I have been augmenting these solvers with graph neural networks for mesh-based super-resolution — enabling large-scale parametric studies that would otherwise require prohibitive compute time.

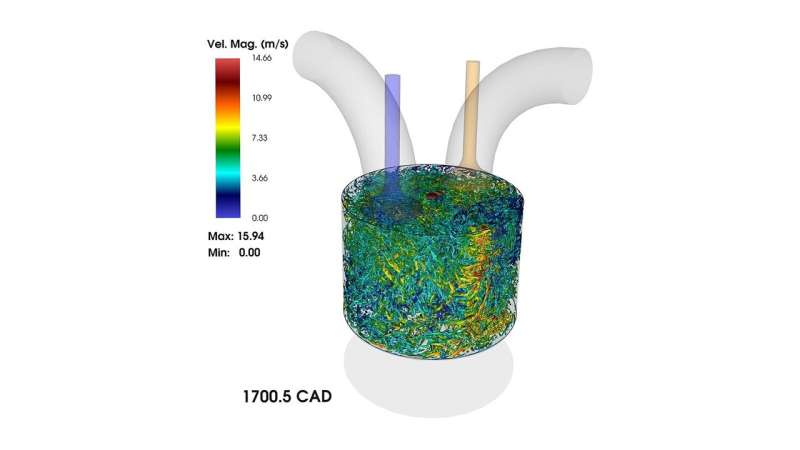

The applications range from internal combustion engine (ICE) design, where our simulations delivered a four-fold speedup on the largest-ever ICE flow calculation, to hemodynamic modeling for clinical decision support and conjugate heat transfer in next-generation energy systems. I am increasingly focused on how in-situ visualization and machine learning can transform these simulations from post-hoc analysis tools into real-time predictive instruments.

I am developing graph neural network surrogates that embed collision-streaming physics directly into the network architecture, trained on spectral-element LBM simulation data. This work combines my SE-DG-LBM solver, the IMEXLBM proxy application, and recent multiscale GNN research to create surrogates that run parametric sweeps orders of magnitude faster while preserving conservation laws.

Builds on the SE-DG-LBM solver (2014–15), IMEXLBM proxy app (2022), flux bounce-back scheme (2025), and multiscale GNN work (2025). Training data from natural convection annulus simulations; IMEXLBM enables fast GPU-based generation across parameter sweeps.

First surrogate model for SE-LBM on unstructured meshes. First to embed moment-space conservation and Chapman-Enskog consistency into GNN layers — combining deep expertise in both spectral-element lattice Boltzmann methods and distributed GNN-based surrogate modeling.
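As a minimal illustration of the conservation property such a surrogate would need to respect, the sketch below implements a plain D2Q9 BGK collision-streaming step in NumPy on a periodic grid. It is not the SE-DG-LBM solver or IMEXLBM; the function names (`moments`, `equilibrium`, `collide_stream`), the relaxation time `tau`, and the uniform periodic grid are assumptions chosen only for illustration.

```python
import numpy as np

# Standard D2Q9 lattice velocities and weights.
C = np.array([[0, 0], [1, 0], [0, 1], [-1, 0], [0, -1],
              [1, 1], [-1, 1], [-1, -1], [1, -1]])
W = np.array([4 / 9] + [1 / 9] * 4 + [1 / 36] * 4)


def moments(f):
    """Density and velocity from populations f of shape (9, nx, ny)."""
    rho = f.sum(axis=0)
    u = np.einsum("qd,qxy->dxy", C, f) / rho
    return rho, u


def equilibrium(rho, u):
    """Second-order equilibrium populations (lattice sound speed^2 = 1/3)."""
    cu = np.einsum("qd,dxy->qxy", C, u)
    usq = (u ** 2).sum(axis=0)
    return W[:, None, None] * rho * (1 + 3 * cu + 4.5 * cu ** 2 - 1.5 * usq)


def collide_stream(f, tau=0.8):
    """One BGK collision followed by periodic streaming (illustrative only)."""
    rho, u = moments(f)
    f = f - (f - equilibrium(rho, u)) / tau       # collision: relax toward equilibrium
    for q, (cx, cy) in enumerate(C):              # streaming: shift along each velocity
        f[q] = np.roll(f[q], shift=(cx, cy), axis=(0, 1))
    return f


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    rho0 = 1.0 + 0.01 * rng.random((32, 32))      # small density perturbation
    f = equilibrium(rho0, np.zeros((2, 32, 32)))  # start at rest
    mass0 = f.sum()
    for _ in range(50):
        f = collide_stream(f)
    print("relative mass drift:", abs(f.sum() - mass0) / mass0)
```

In this toy setting, total mass (the sum of all populations) is preserved by both collision and streaming up to floating-point round-off. A physics-embedded surrogate would be expected to reproduce invariants of this kind by construction rather than approximately.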

With the automotive industry’s pivot to hydrogen, I am extending my proven ICE simulation framework to model hydrogen direct-injection combustion at wall-resolved fidelity. The thin H₂ flame front is uniquely suited to spectral-element discretization, and DOE’s prioritization of hydrogen energy aligns with available exascale computing allocations on Aurora.

Builds on the ICE portfolio (2022 cyclic variability, 2024 spray dynamics), ALE/moving-domain work (2016, 2019), POD methodology, the NekRS solver with its ALE framework, ICE meshing strategies, and ALCF computing allocations.

Wall-resolved LES of hydrogen ICE is essentially unexplored at scale. Thin H₂ flame fronts are uniquely suited to spectral elements. Hydrogen engines are a top DOE priority, Aurora is online, and the tools, allocations, and track record are in place.

Building on the SENSEI-NekRS coupling framework recognized with the ISAV 2023 Best Paper Award, I am developing methods to transform in-situ visualization from passive monitoring into active computational steering. In hemodynamic simulations, real-time wall shear stress maps could automatically trigger spectral-element p-refinement where it matters clinically.

Builds on the SENSEI-NekRS coupling (ISAV 2023 Best Paper), GPU hemodynamic solver (SC23), spectral element p-refinement capability, and performance models from the SC23 benchmarking paper.

In-situ visualization has traditionally been passive observation; this work makes it an active steering mechanism. The approach is directly clinically relevant: clinicians care about localized wall shear stress (WSS) at the aneurysm neck, and p-refinement targets exactly that region without introducing GPU load-balancing problems.

Applying the same conjugate heat transfer physics from my doctoral work to a new domain, I am investigating how distributed graph neural networks can enable real-time thermal management digital twins for electric vehicle motor cooling — predicting full thermal fields from sparse thermocouple data without resolution loss.

Builds on conjugate heat transfer expertise (SE-LBM, 2015), distributed GNN scaling, mesh super-resolution (2025), and GPU computing capabilities. Same physics, new geometry — the distributed GNN framework and multiscale architecture carry directly.

A GNN preserves the full spatial field on the actual mesh, with none of the resolution loss of a POD-ROM, and handles complex geometries without retraining. The EV transition is a defining industrial shift, and DOE electrification priorities align with Argonne’s automotive portfolio.

Leveraging my experience porting solvers across NVIDIA, AMD, and Intel GPU architectures, I am conducting systematic benchmarks of the filtered spectral-element LBM across Frontier, Polaris, and Aurora. This would be the first cross-architecture study of any SE-LBM variant.

Builds on the IMEXLBM proxy app (2022), flux bounce-back implementation (2025), multi-framework benchmarking methodology (SC23 hemodynamics paper), and ALCF machine allocations across all three vendors.

SE-LBM has fundamentally different GPU characteristics than standard LBM: a collision step with high arithmetic intensity, memory-bound streaming, and filtering. These characteristics have never been studied across architectures. This is the fastest-track direction: a benchmarking study with existing codes that can proceed in parallel with longer-term projects.

I am exploring opportunities in computational science, HPC engineering, and faculty positions in the greater Philadelphia area, beginning Fall 2026. I welcome conversations about research collaboration, open positions, or shared interests in high-performance scientific computing.